May 2012, Vol. 239 No. 5

Features

Decisions Hastened By Linking Real-time Data And Models

One of the most challenging aspects of the oil and gas pipeline industry for this writer is its collective failure to embrace a technological advance adopted by virtually every other industry – the use of an integrated data environment and real-time analytics to govern day-to-day operations.

In fact, little new analytical insight has been introduced across the energy industry since the 1960s, while most other data-intensive industries – retail, banking, and manufacturing, to name but a few – routinely acquire, transmit, store, process, quality-control, interpret, and archive data. They have adopted a "data is an asset" mindset rather than a "data is a cost" mentality, and have realized greater shareholder value as a result. In fact, most companies in other industries have found that the easiest and least expensive way to add shareholder value is through analytics.

The integration and pervasive use of operational data in the oil and gas pipeline industry makes sense. Today, engineering and operations staff must work through time-consuming manual searches across multiple applications to answer even the most basic operational questions. By integrating information already available within the company, the time to decision is cut dramatically, with no loss in decision quality.

To get to the desired state, the industry must adopt a data management-centric approach to the flow of information and a new way of thinking about the business. Consider the situation today: company data is kept in silos, then copied and manipulated to meet immediate needs; Supervisory Control and Data Acquisition (SCADA) data is not integrated with other key data elements; and there’s no direct link between real-time data and engineering or economic models. Pipeline companies today struggle under these limitations and the constraints on performance imposed by this lack of integrated information. They risk time-consuming and expensive failures stemming from the lack of real-time information.

Now, consider the newest initiatives in a digital operations approach: from the reservoir to the point of sale, workflows for asset management are integrated; data is available continuously and in real time; and a robust data and information management process is in place. In this scenario, the company has the ability to run real-time, 24-hour operations and collaboration centers. Further, the company gains a portfolio view of its entire value chain and of the whole lifecycle of each asset.

Pipeline companies today do not take an integrated data environment approach, instead relying on multiple operational data stores or historians. In this scenario, no logical data model is applied to the source data structure; performance constraints further slow the use of data in decision-making; there is no real-time capability in the flow of information; and in many instances engineers are not allowed to query the data directly.

By contrast, a digital integrated operations approach means establishing a business-owned data management program with policies, rules and tools to ensure high quality of information – a single trusted source. It also means that data is gathered in real time, there is a logical structure of the data, and once it is loaded into the integrated data environment, it can be used over and over again.

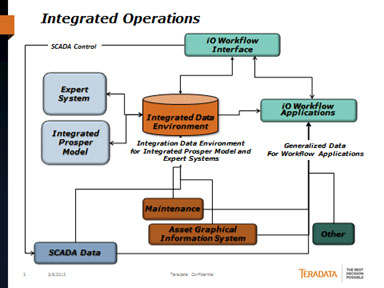

Properly structured, the integrated data environment can facilitate full analysis of the data. Any question can be asked of the data, whether it was anticipated or not, without having to drastically manipulate the data. At a high level, the data flow architecture looks like Figure 1.

- The function of the Integrated Operations Workflow Interface is workflow visualization and human interface.

- The function of the Integrated Operations Workflow Applications is specific visualization and analysis.

- These applications will source data directly from data sources and the Integrated Data Environment.

- The function of the Integrated Data Environment is logical integration of the data required for high-performance automation of the Integrated Production (Prosper) Model and Expert Systems workflows and other ad hoc or automated numerical analytics.

- The Integrated Data Environment contains only the subset of source data required to fulfill its function.

- The Integrated Data Environment can also be involved in the SCADA control loop.

Figure 1.
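To make the idea of "load once, query repeatedly" more concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module. It is not the architecture in Figure 1 and not any vendor's product: the table names (`assets`, `scada_readings`), the column names, the sample values, and the helper `readings_near_limit` are all hypothetical, chosen only to show SCADA-style readings and asset reference data sitting in one logical structure where an unanticipated question can be asked with a single query.

```python
import sqlite3

# Hypothetical schema: every table, column, and value below is illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE assets (
        asset_id         TEXT PRIMARY KEY,
        segment          TEXT,
        max_pressure_psi REAL
    );
    CREATE TABLE scada_readings (
        asset_id     TEXT REFERENCES assets(asset_id),
        read_at      TEXT,
        pressure_psi REAL
    );
""")

# Data is loaded once into the shared environment...
conn.executemany("INSERT INTO assets VALUES (?, ?, ?)", [
    ("PS-01", "North", 1000.0),
    ("PS-02", "South", 900.0),
])
conn.executemany("INSERT INTO scada_readings VALUES (?, ?, ?)", [
    ("PS-01", "2012-05-01T00:00", 900.0),
    ("PS-01", "2012-05-01T01:00", 990.0),
    ("PS-02", "2012-05-01T00:00", 700.0),
    ("PS-02", "2012-05-01T01:00", 880.0),
])


def readings_near_limit(conn, fraction):
    """Return readings at or above `fraction` of each asset's rated pressure."""
    return conn.execute(
        """
        SELECT a.asset_id, a.segment, r.read_at, r.pressure_psi
        FROM scada_readings AS r
        JOIN assets AS a ON a.asset_id = r.asset_id
        WHERE r.pressure_psi >= ? * a.max_pressure_psi
        ORDER BY a.asset_id, r.read_at
        """,
        (fraction,),
    ).fetchall()


# ...and then queried as often as needed, without copying or reshaping it.
near_limit = readings_near_limit(conn, 0.95)
for row in near_limit:
    print(row)
```

In a siloed setup, the same question would typically mean exporting readings from a historian, looking up rated pressures in a separate system, and joining the two by hand. Here the join is part of the query, and the threshold can be changed without touching the data.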

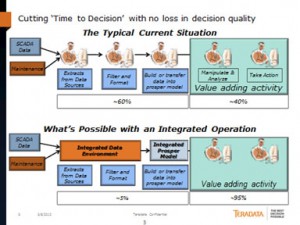

As one can see in Figure 2, engineers and scientists working in companies where data is siloed spend, on average, 14 work days out of every month searching for data, integrating it from multiple sources, and preparing it for analysis in applications, leaving only nine work days for analysis that can inform decisions and drive actions. When a company eliminates the manual data processes by moving to an integrated data environment, no time is wasted in the manual manipulation of data. Engineers and data scientists gain 13 additional days every month for value-adding activities regarding operational or project decisions.

Figure 2.
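The monthly figures quoted with Figure 2 can be annualized with simple arithmetic. The short Python sketch below does only that; the constant and function names are illustrative, and the 12-month multiplier is an assumption (it ignores holidays and leave).

```python
# Figures as quoted in the text accompanying Figure 2.
DATA_HANDLING_DAYS_PER_MONTH = 14   # searching, integrating, preparing data
ANALYSIS_DAYS_PER_MONTH = 9         # analysis that informs decisions
DAYS_GAINED_PER_MONTH = 13          # gain cited for an integrated environment
MONTHS_PER_YEAR = 12                # assumption: no allowance for leave


def annual_days_gained(days_per_month, months=MONTHS_PER_YEAR):
    """Scale a monthly gain in work days to a yearly figure."""
    return days_per_month * months


work_days_per_month = DATA_HANDLING_DAYS_PER_MONTH + ANALYSIS_DAYS_PER_MONTH
gained_per_year = annual_days_gained(DAYS_GAINED_PER_MONTH)

print(f"Work days per month implied by the figures: {work_days_per_month}")
print(f"Work days gained per person per year: {gained_per_year}")
```

On these assumptions, 13 days a month comes to 156 days a year per person.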

Adopting data management technology potentially has an enormous impact. Centralizing integrated production model and expert systems information in an integrated data environment that can handle the information requirements of the industry means the information is stored once and used repeatedly by engineers and scientists to run their different engineering and economic models. The productivity of both groups rises by more than 150 additional value-added work days per year per person. It’s easy to view data as an asset that can be used to speed the time to decision and build company equity. It’s time for the industry to follow other industries in applying data management best practices to integrated operations.

Author

Glen Sartain is the director for oil and gas and high-tech consulting at Teradata Corporation. He can be reached at 609-433-1715 or D’Anne.hotchkiss@teradata.com.
